In addition, I ran into problems when importing files:

1. The page displays an error: "Something went wrong during the file_import job execution."

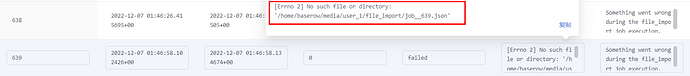

2、I check the information of the import task in the database:

3、

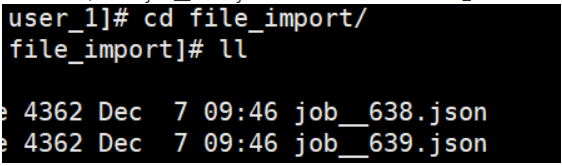

The “job__ 639. json” exists under “MEDIA_ROOT/user_id/file_import”:

The import sometimes succeeds. When it fails, it eventually succeeds after several attempts.

@bram @nigel @Alex Excuse me, I use Baserow to record various information about my class, so I need to import files frequently. Do you have any ideas about this problem?

Update:

I seem to have found the reason! I am now running two servers, A and B, which share the same Redis and database. When I import a file, server A's backend receives the API request `/api/database/tables/database/{database_id}/async/` and generates `job__{job_id}.json`. However, the asynchronous task seems to be assigned to server B for execution, and server B's log shows:

==> exportworker.error <==

[2022-12-12 09:18:55,430: INFO/MainProcess] Task baserow.core.jobs.tasks.run_async_job[fabd16a6-ee86-432d-a383-5e899403d09f] received

[2022-12-12 09:18:55,459: FileNotFoundError: [Errno 2] No such file or directory: '/home/baserow/media/user_2/file_import/job__1076.json'
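To make my guess concrete, here is a minimal sketch of what I think is happening. It is not Baserow's code: `write_job_file`, `run_job` and `simulate` are made-up helpers, and two temporary directories stand in for the local `MEDIA_ROOT` of server A and server B. The directory layout is copied from the path in the log above.

```python
import json
import tempfile
from pathlib import Path


def write_job_file(media_root: Path, user_id: int, job_id: int, rows: list) -> Path:
    """Stand-in for the backend saving the uploaded data (hypothetical helper)."""
    job_dir = media_root / f"user_{user_id}" / "file_import"
    job_dir.mkdir(parents=True, exist_ok=True)
    path = job_dir / f"job__{job_id}.json"
    path.write_text(json.dumps(rows))
    return path


def run_job(media_root: Path, user_id: int, job_id: int) -> list:
    """Stand-in for a worker reading the job file from its own MEDIA_ROOT."""
    path = media_root / f"user_{user_id}" / "file_import" / f"job__{job_id}.json"
    return json.loads(path.read_text())


def simulate(shared: bool) -> str:
    """Write the file on 'server A', then try to read it on 'server B'."""
    with tempfile.TemporaryDirectory() as dir_a, tempfile.TemporaryDirectory() as dir_b:
        media_a = Path(dir_a)
        # With shared storage both servers see the same directory.
        media_b = media_a if shared else Path(dir_b)
        write_job_file(media_a, user_id=2, job_id=1076, rows=[["name"], ["Alice"]])
        try:
            run_job(media_b, user_id=2, job_id=1076)
            return "ok"
        except FileNotFoundError:
            return "FileNotFoundError"


if __name__ == "__main__":
    print("separate media directories:", simulate(shared=False))
    print("shared media directory:", simulate(shared=True))
```

If this picture is right, it would also explain why the import only fails sometimes: it works whenever the task happens to be picked up by a worker on the same server that wrote the file.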

Is this problem caused by the two servers sharing Redis and the database? Can you share your thoughts?

My Supervisor configuration:

[supervisord]
nodaemon = true
environment =
    DJANGO_SETTINGS_MODULE='baserow.config.settings.base',
    MEDIA_ROOT='/home/baserow/media',
    DATABASE_HOST='142.468.0.00',
    DATABASE_PASSWORD='',
    SECRET_KEY='0ifhVlGHfxSVTY6mn0lzIwAuKsJNm9Xrdm1box10xnaaY4EklR8JFnVrEMp3CsWe',
    DATABASE_NAME="baserow",
    DATABASE_USER="baserow",
    PRIVATE_BACKEND_URL='http://backend:8000', ---> (private IP of each server)
    PUBLIC_WEB_FRONTEND_URL='http://10.416.77.000:3000', ---> (public web-frontend nginx url)
    PUBLIC_BACKEND_URL='http://10.416.77.000:8000', ---> (public backend nginx url)
    MEDIA_URL='http://10.416.77.000:8080/media/', ---> (media nginx url)
    REDIS_HOST='142.328.0.00',
    REDIS_PASSWORD='',

[program:gunicorn]
command = /home/baserow/python/virtual/env/bin/gunicorn -w 5 -b 0.0.0.0:8000 -k uvicorn.workers.UvicornWorker baserow.config.asgi:application --log-level=debug --chdir=/home/baserow/
stdout_logfile=/home/baserow/logs/backend.log
stderr_logfile=/home/baserow/logs/backend.error
user=baserow

[program:worker]
command=/home/baserow/python/virtual/env/bin/celery -A baserow worker -l INFO -Q celery -n worker%%h
stdout_logfile=/home/baserow/logs/worker.log
stderr_logfile=/home/baserow/logs/worker.error
user=baserow

[program:exportworker]
command=/home/baserow/python/virtual/env/bin/celery -A baserow worker -l INFO -Q export -n exportworker%%h
stdout_logfile=/home/baserow/logs/exportworker.log
stderr_logfile=/home/baserow/logs/exportworker.error
user=baserow

[program:beatworker]
directory=/home/baserow/python
command=/home/baserow/python/virtual/env/bin/celery -A baserow beat -l INFO -S redbeat.RedBeatScheduler
stdout_logfile=/home/baserow/logs/exportworker.log
stderr_logfile=/home/baserow/logs/exportworker.error
user=baserow

[program:nuxt]
directory = /home/baserow/web-frontend
command = node ./node_modules/.bin/nuxt start --hostname 196.542.15.00 --port 3000 --config-file ./config/nuxt.config.dev.js
stdout_logfile = /home/baserow/logs/frontend.log
stderr_logfile = /home/baserow/logs/frontend.error
user=baserow
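To check this on each machine, I could run a small script like the one below on both server A and server B right after a failed import. This is only a sketch: `job_file_path` and `describe` are names I made up, the fallback `/home/baserow/media` is the `MEDIA_ROOT` from my configuration, and the user id and job id are taken from the log line above.

```python
import os
import socket
from pathlib import Path


def job_file_path(media_root: str, user_id: int, job_id: int) -> Path:
    """Build the path where the import job file is expected (layout taken from the log)."""
    return Path(media_root) / f"user_{user_id}" / "file_import" / f"job__{job_id}.json"


def describe(media_root: str, user_id: int, job_id: int) -> str:
    """Report whether the job file is visible from this host."""
    path = job_file_path(media_root, user_id, job_id)
    state = "exists" if path.is_file() else "MISSING"
    return f"{socket.gethostname()}: {path} {state}"


if __name__ == "__main__":
    # MEDIA_ROOT is read from the environment; the fallback is an assumption.
    media_root = os.environ.get("MEDIA_ROOT", "/home/baserow/media")
    print(describe(media_root, user_id=2, job_id=1076))
```

If the file exists on one server and is reported missing on the other, the two servers are not looking at the same media directory.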